When data is messy

There’s a story I tell in my book because it’s a great illustration of how AI gets the wrong idea about what problem we’re asking it to solve:

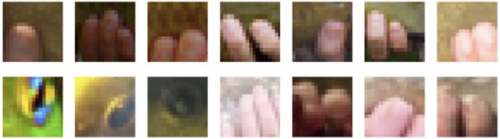

Researchers at the University of Tuebingen trained a neural net to recognize images, and then had it point out which parts of the images were the most important for its decision. When they asked it to highlight the most important pixels for the category “tench” (a kind of fish), this is what it highlighted:

Human fingers against a green background!

Why was it looking for human fingers when it was supposed to be looking for a fish? It turns out that most of the tench pictures the neural net had seen were of people holding the fish as a trophy. It doesn’t have any context for what a tench actually is, so it assumes the fingers are part of the fish.

The image-generating neural net in ArtBreeder (called BigGAN) was also trained on the same dataset, called ImageNet, and when you ask it to generate tenches, this is what it does:

The humans are much more distinct than the fish, and I’m fascinated by the highly exaggerated human fingers.

There are other categories in ImageNet that have similar problems. Here’s “microphone”.

It’s figured out about dramatic stage lighting and human forms, but many of its images don’t contain anything that remotely resembles a microphone. In so many of its training pictures the microphone is a tiny part of the image, easy to overlook. There are similar problems with small instruments like “flute” and “oboe”.

In other cases, there might be evidence of pictures being mislabeled. In these generated images of “football helmet”, some of them are clearly of people NOT wearing helmets, and a few even look suspiciously like baseball helmets.

ImageNet is a really messy dataset. It has a category for agama, but none for giraffe. Rather than horse as a category, it has sorrel (a specific color of horse). “Bicycle built for two” is a category, but not skateboard.

A huge reason for ImageNet’s messiness is that it was automatically scraped from images on the internet. The images were supposed to have been filtered by the crowdsourced workers who labeled them, but plenty of weirdness slipped through. And horribleness - many images and labels that definitely shouldn’t have appeared in a general-purpose research dataset, and images that looked like they had gotten there without the consent of the people pictured. After several years of widespread use by the AI community, the ImageNet team has reportedly been removing some of that content. Other problematic datasets - like those scraped from online images without permission, or from surveillance footage - have been removed recently. (Others, like Clearview AI’s, are still in use.)

This week Vinay Prabhu and Abeba Birhane pointed out major problems with another dataset, 80 Million Tiny Images, which scraped images and automatically assigned tags to them with the help of another neural net trained on internet text. The internet text, you may be shocked to hear, had some pretty offensive stuff in it. MIT CSAIL removed that dataset permanently rather than manually filter all 80 million images.

This is not just a problem with bad data, but with a system where major research groups can release datasets with such huge issues with offensive language and lack of consent. As tech ethicist Shannon Vallor put it, ”For any institution that does machine learning today, ‘we didn’t know’ isn’t an excuse, it’s a confession”. Like the algorithm that upscaled Obama into a white man, ImageNet is the product of a machine learning community where there’s a huge lack of diversity. (Did you notice that most of the generated humans in this blog post are white? If you didn’t notice, that might be because so much of Western culture treats white as default).

It takes a lot of work to create a better dataset - and to be more aware of which datasets should never be created. But it’s work worth doing.

AI Weirdness supporters get a gallery of a few of my favorite BigGAN image categories as bonus content. Or become a free subscriber to get new AI Weirdness posts in your inbox!

My book on AI is out, and, you can now get it any of these several ways! Amazon - Barnes & Noble - Indiebound - Tattered Cover - Powell’s - Boulder Bookstore