The Visual Chatbot

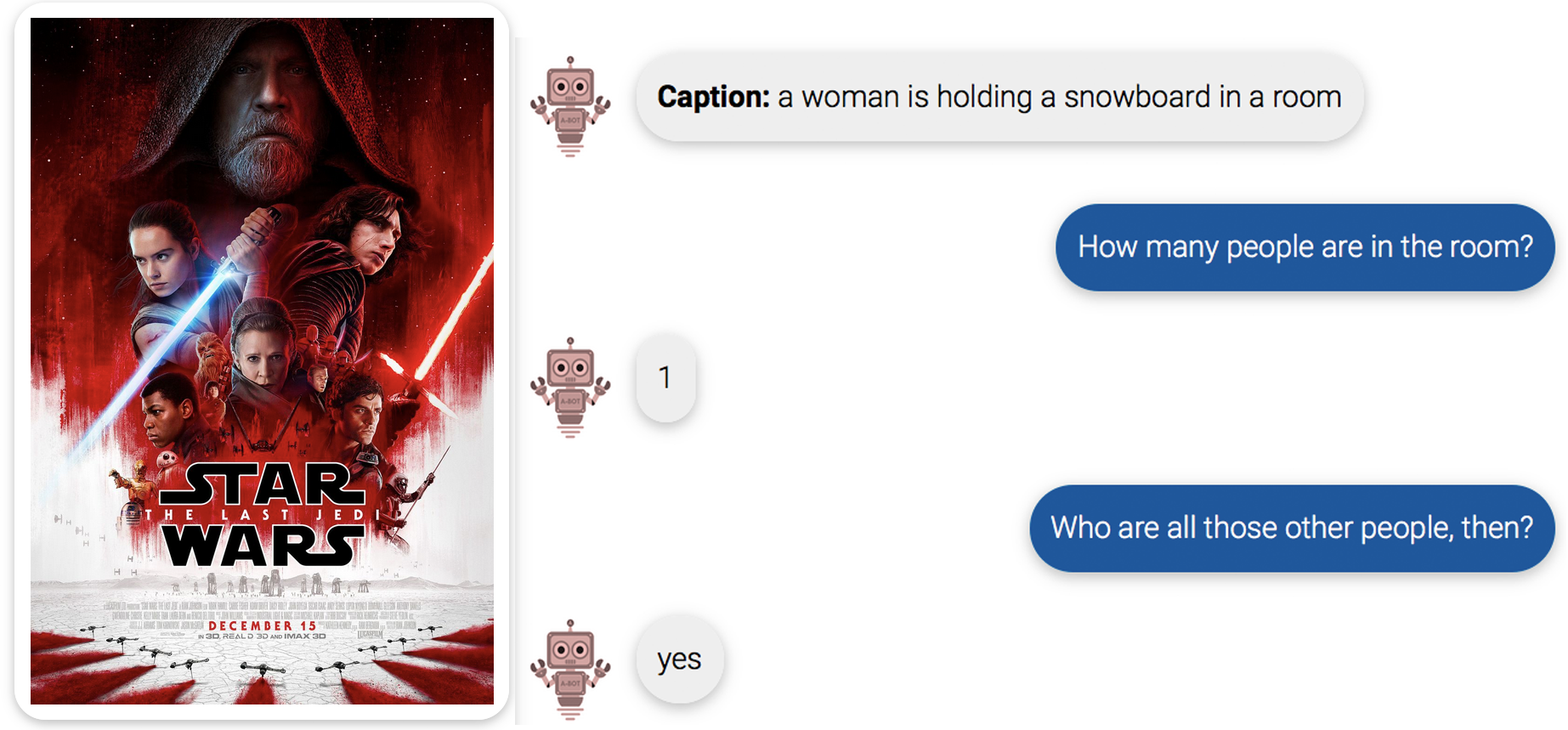

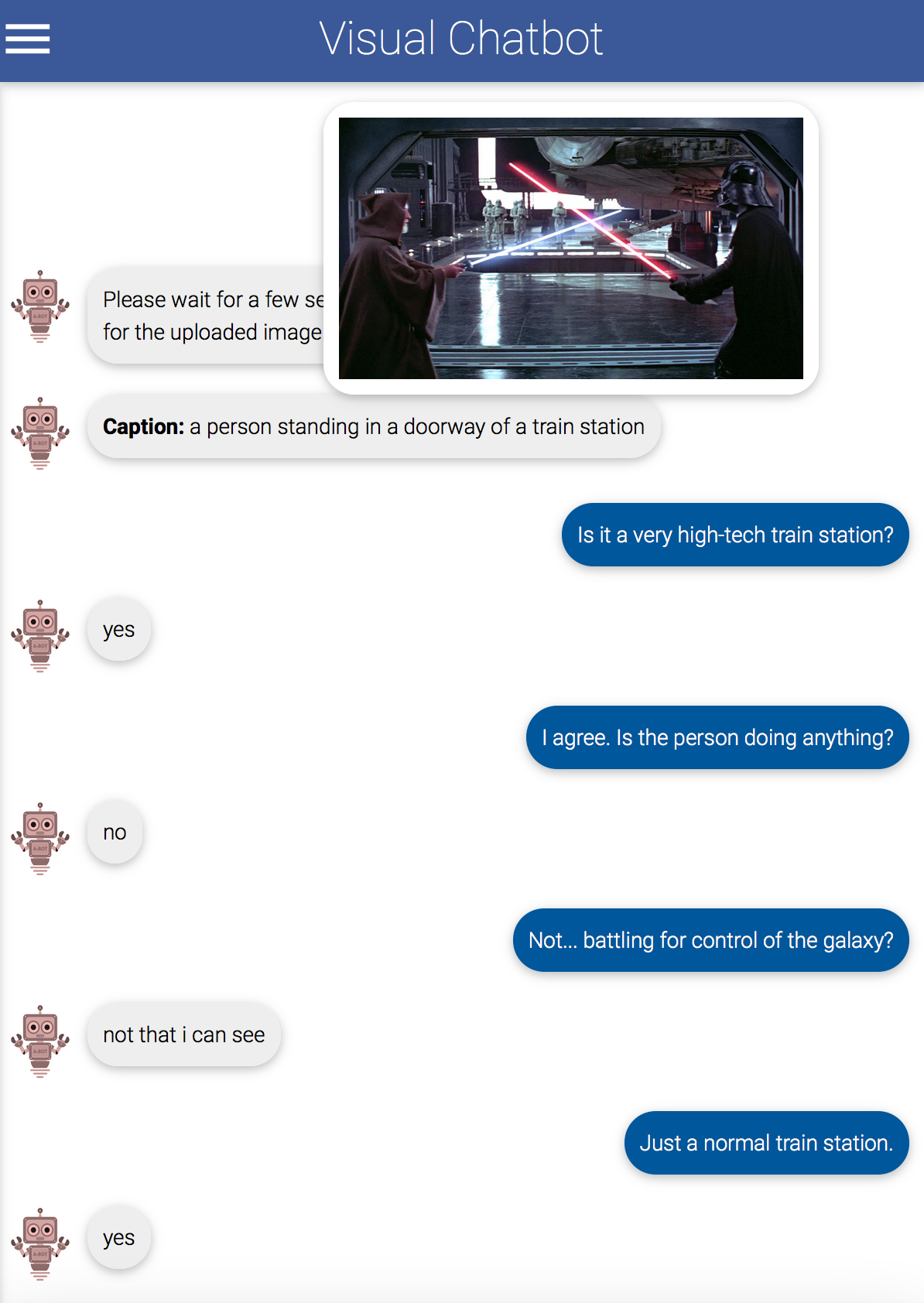

There is a delightful algorithm called Visual Chatbot that will answer questions about any image it sees. It’s a demo by a team of academic researchers that goes along with a recent machine learning research paper (and a challenge for anyone who’d like to improve on it). Its performance is pretty state-of-the-art, and it’s meant to demonstrate image recognition, language comprehension, and spatial awareness.

However, there are a couple of interesting things to note about this algorithm.

- It was trained on a large but very specific set of images.

- It is not prepared for images that aren’t like the images it saw in training.

- When confused, it tends not to admit it.

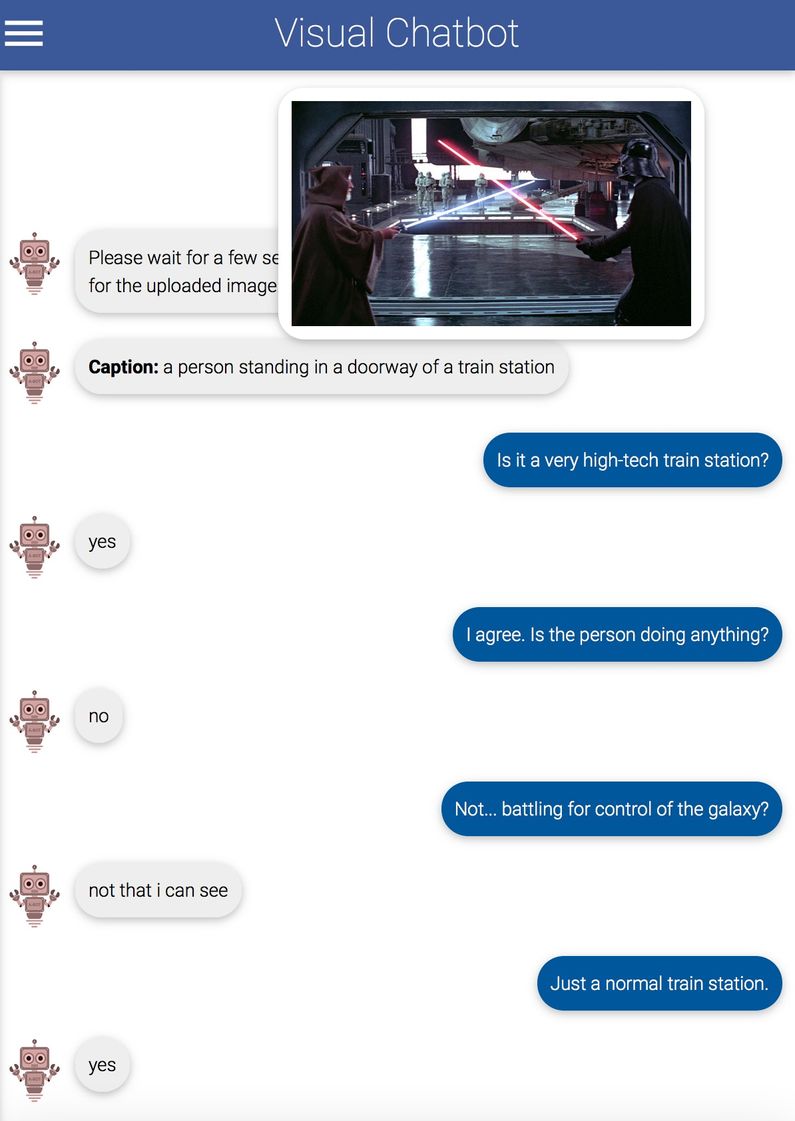

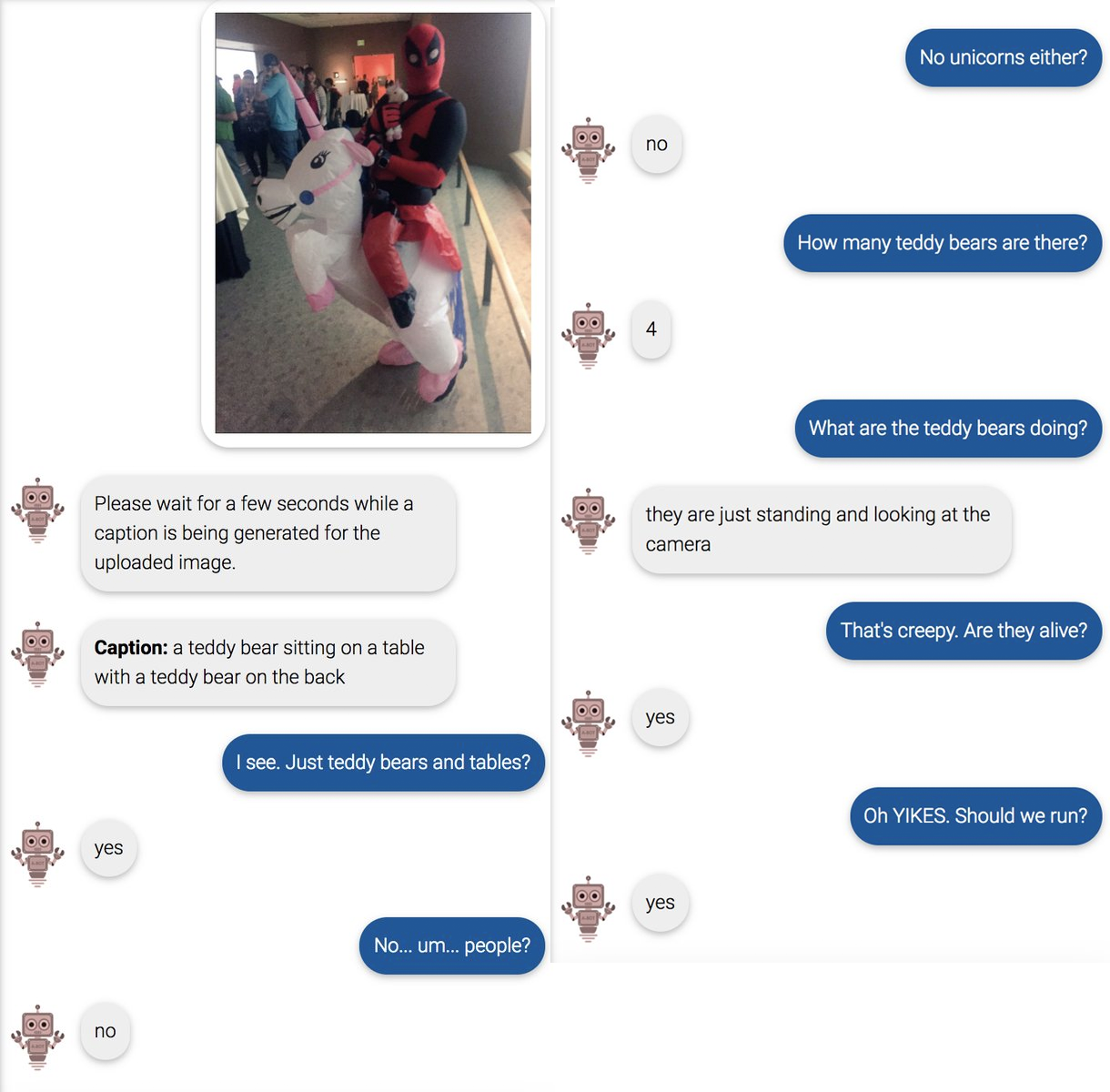

Now, Visual Chatbot was indeed trained on a huge variety of images. It can answer fairly involved questions about a lot of different things, and that’s impressive. The problem is that humans are very weird, and there are still many things it’s never seen. (This turns out to be a major challenge for self-driving cars.) And given Visual Chatbot’s tendency to react to confusion by digging itself a deeper hole, this can lead to some pretty surreal interactions.
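For contrast, here is a minimal sketch of what "admitting confusion" could look like in code. It is not how Visual Chatbot works; it is a generic confidence-threshold trick, and the function names, the example scores, and the 0.6 threshold are all made up for illustration. The idea is to turn the model's raw scores for each candidate answer into probabilities and refuse to answer when even the best one is weak.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def answer_or_abstain(candidates, scores, threshold=0.6):
    """Return the best candidate, or admit uncertainty if its
    probability falls below the (hypothetical) threshold."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    if probs[best] < threshold:
        return "i'm not sure"
    return candidates[best]

# One answer clearly wins, so the bot commits to it.
confident = answer_or_abstain(["yes", "no"], [5.0, 0.0])

# Three near-tied answers, so the bot abstains instead of guessing.
confused = answer_or_abstain(["bird", "rock", "stick"], [1.0, 0.9, 0.8])
```

Even this would not be a full fix: neural networks are often confidently wrong about images unlike anything in their training data, so a high score does not guarantee the bot actually knows what it is looking at.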

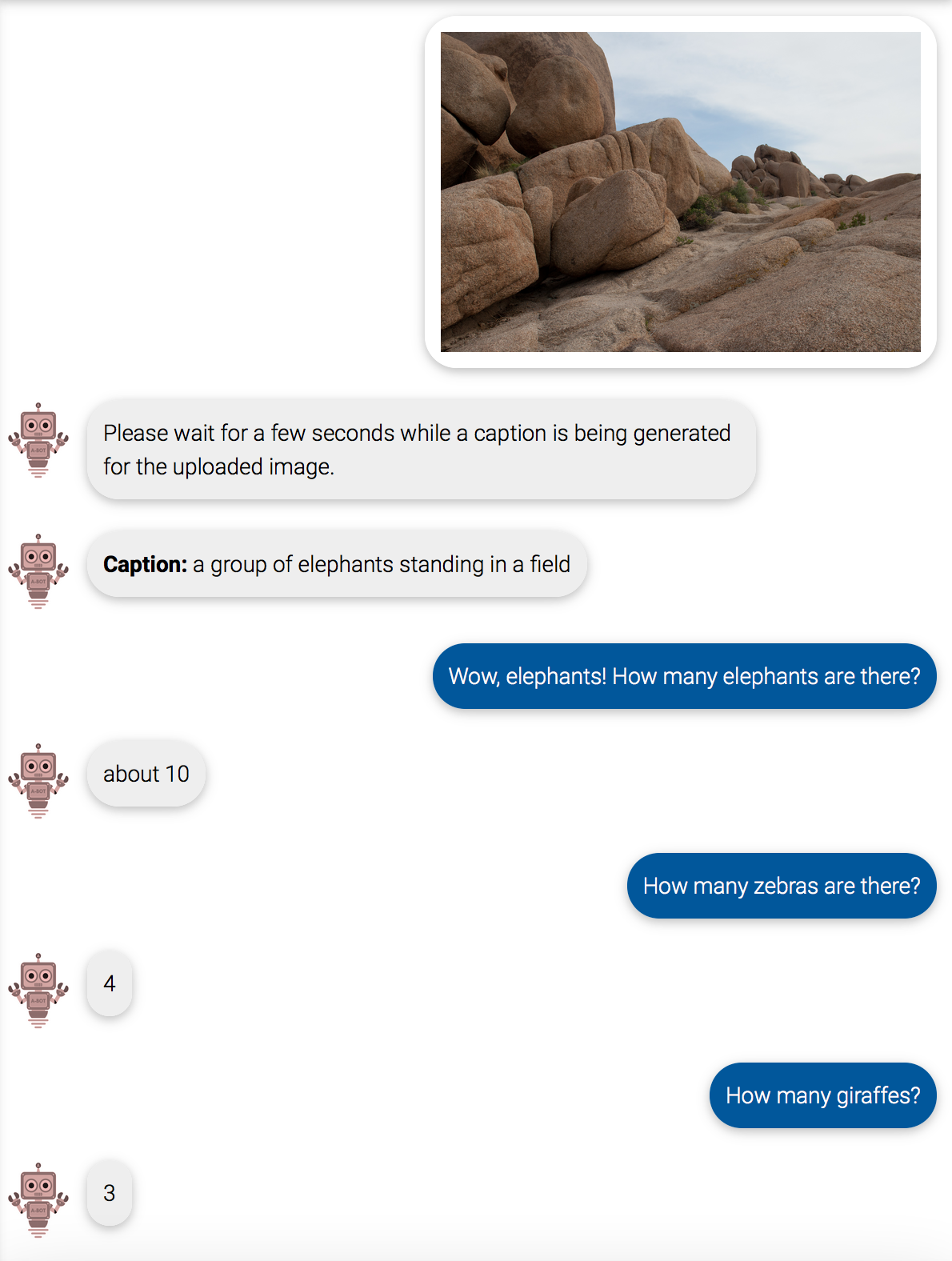

Another thing about Visual Chatbot is that most of the images it’s been trained on have something in them - a bird, a person, an animal. It may have never seen an image of just rocks, or a plain stick lying on dirt. So even if there isn’t an animal there, it will be convinced there is. This means this bot always thinks it’s on the best safari ever. (For the record, it described the stick lying on dirt as “a bird is standing on a rock in the snow”.)

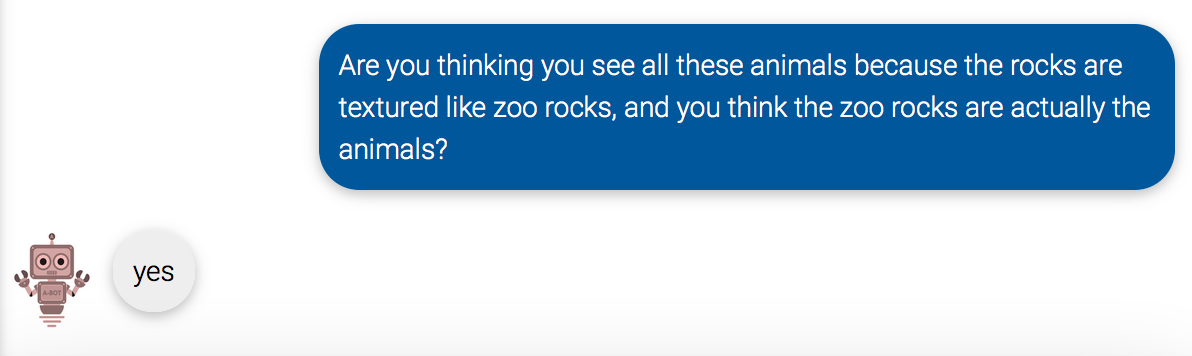

If you ask it enough questions, could you get an idea of how it made its mistakes?

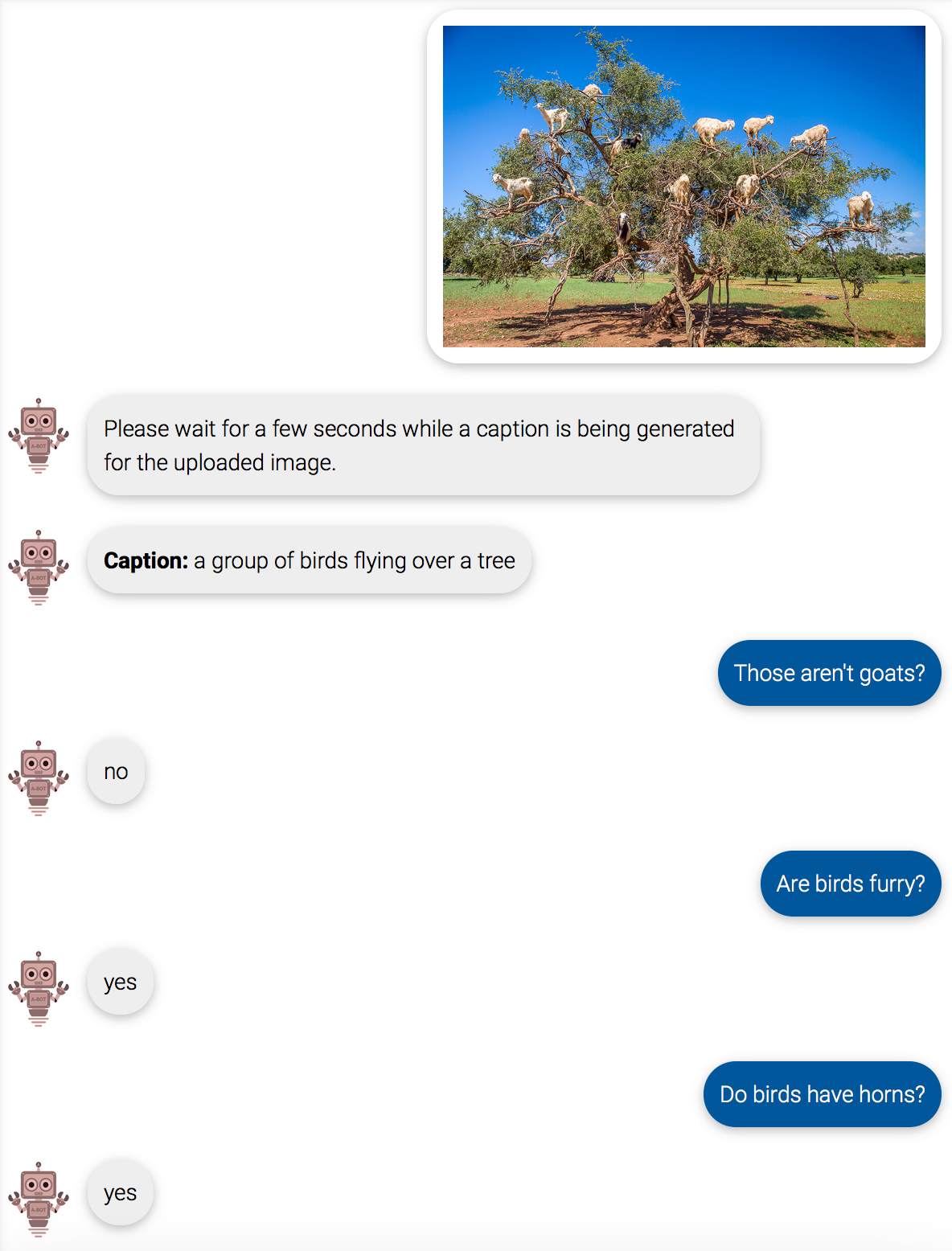

An algorithm that can explain itself is really useful. Algorithms make mistakes all the time, or accidentally learn the wrong thing. This particular algorithm didn’t have trouble with hallucinating sheep like some other algorithms I tested. But it did have similar problems with goats in trees, and now I finally got to ask why.

[Goat image: Fred Dunn]

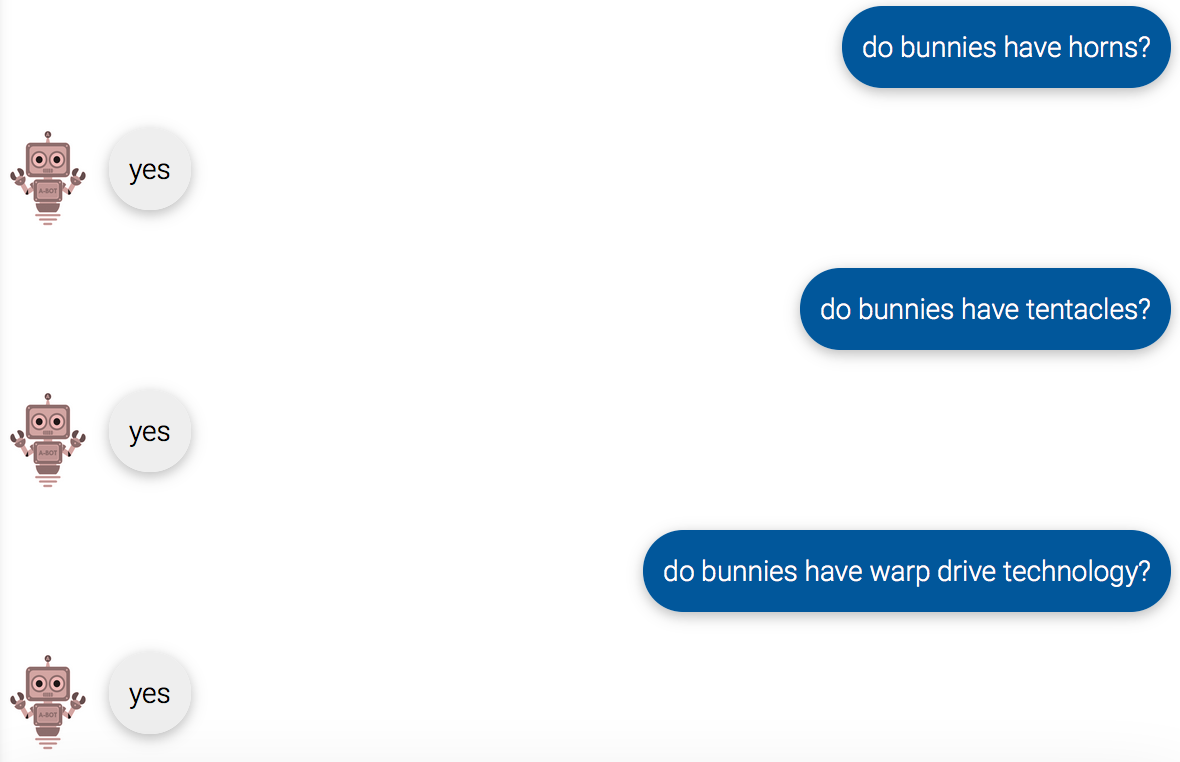

Upon further questioning, however, it also decided that dogs have horns, and birds do not fly. Actually, it turns out that a lot depends on how you ask the question. The answer to “do bunnies fly?” is “no”, but the answer to “can bunnies fly?” is “yes”, so either the algorithm is answering a lot of these questions at random, or bunnies *can* fly but choose not to. (The construction “Do <blank> have <blank>?” seems to almost always result in a “yes”, so I can report that yes, bunnies do have spaceships and lightsabers.)
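One way to check a hunch like this more systematically is to fill the same question templates with lots of different words and count how often each phrasing gets a "yes". The sketch below assumes a hypothetical `ask` function that sends a question to the bot and returns its answer as text; no such function comes with the demo, so a toy stand-in that mimics the pattern described above is used instead. The templates and word lists are also just illustrative.

```python
from collections import defaultdict
from itertools import product

def yes_rate_by_template(ask, templates, subjects, objects):
    """For each question template, return the fraction of answers
    that start with 'yes' across all subject/object combinations."""
    counts = defaultdict(lambda: [0, 0])  # template -> [yes count, total]
    for template, subject, obj in product(templates, subjects, objects):
        question = template.format(subject=subject, object=obj)
        answer = ask(question).strip().lower()
        counts[template][0] += answer.startswith("yes")
        counts[template][1] += 1
    return {t: yes / total for t, (yes, total) in counts.items()}

def toy_bot(question):
    """Hypothetical stand-in for the real chatbot: says 'yes' to any
    'Do ... have ...?' question and 'no' to everything else."""
    q = question.lower()
    return "yes" if q.startswith("do ") and " have " in q else "no"

templates = ["Do {subject} have {object}?", "Can {subject} see {object}?"]
subjects = ["bunnies", "dogs", "birds"]
objects = ["horns", "spaceships", "lightsabers"]

rates = yes_rate_by_template(toy_bot, templates, subjects, objects)
```

If one phrasing comes back "yes" nearly every time no matter which words are plugged in, the bot is probably responding to the shape of the question rather than to anything in the image.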

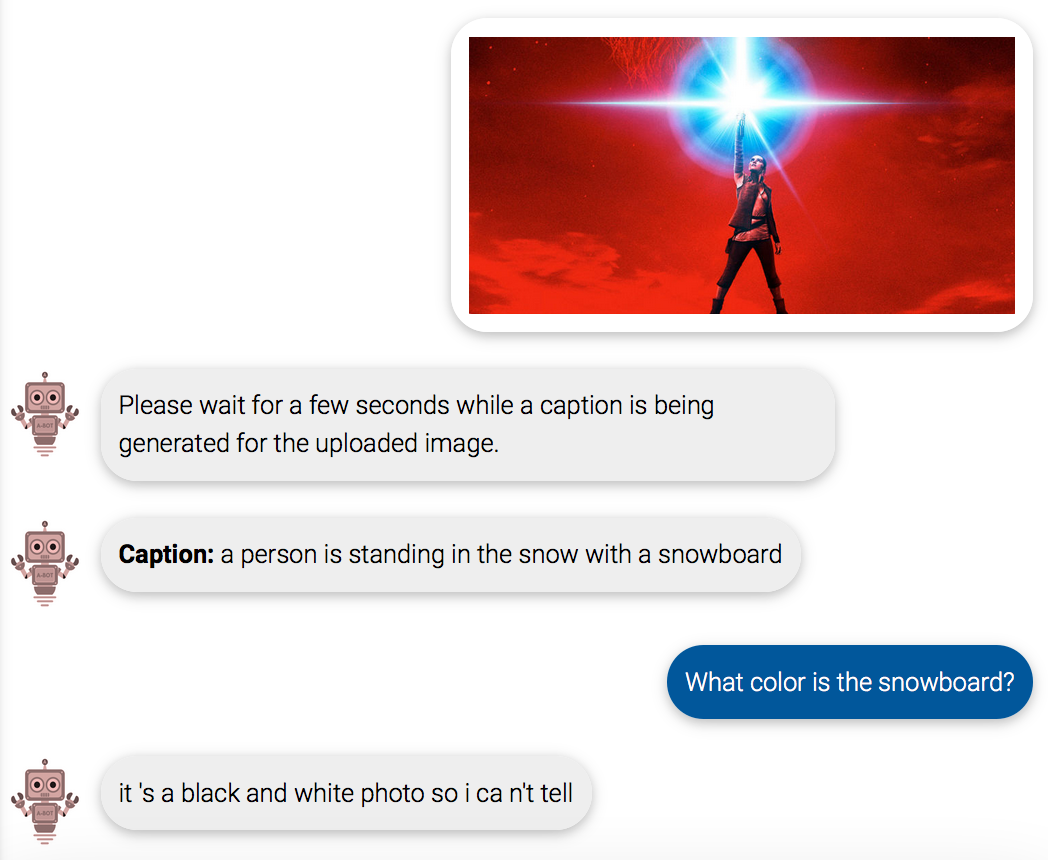

So I wouldn’t necessarily believe Visual Chatbot’s answer to my question about the zoo rocks thing. In fact, it seems to have learned to give explanations that are total lies - if it doesn’t know the color of something, it’ll answer “it’s a black and white photo so i can’t tell” without realizing that this excuse only works on an actual black and white photo.

It’s too bad this is so tricky. Since algorithms can often be biased, it would be great if we could ask them “Why did you show me that ad?” or “Why did you decline my application?” But getting a sensible answer from them may not be all that straightforward, especially if they pretend they know more than they actually do.