The art of asking nicely

There are upsides to working with a neural net that trained on a huge collection of internet images and text. One is that, instead of ominous grey geometric blobs when it doesn't understand your prompt (there is a free interactive demo of AttnGAN here and it is a lot of fun), a huge neural net like CLIP can follow a wide array of prompts. Since CLIP technically only judges how well a picture matches a prompt, people have developed a few ways of using CLIP's judgements to aim an image generator. I've used this to generate such useful things as Frodo Baggins delivering pizza through the Mines of Moria, cursed candy hearts, fully illustrated sea shanties, and more fully illustrated sea shanties.

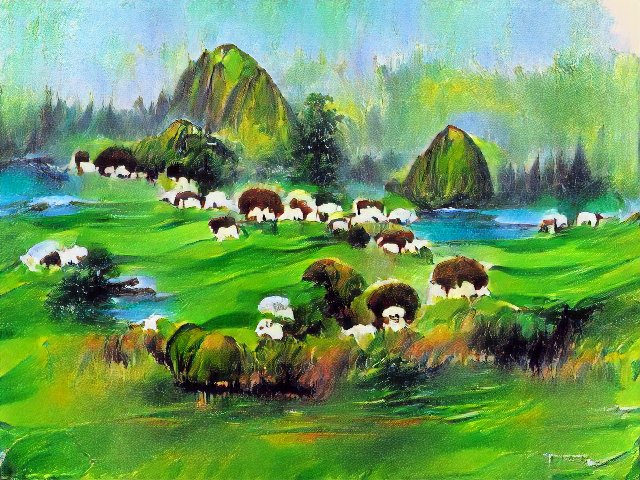

I decided to give the VQGAN + CLIP method of steering a try (tutorial and link here), and for its first task I decided to have it generate a subject that's given neural nets trouble in the past: "a herd of sheep grazing on a lush green hillside".

Here's what it generated after 450 iterations:

It could be better. The sheep are not so much grazing as embedded like weird molars, and the hillside isn't very picturesque.

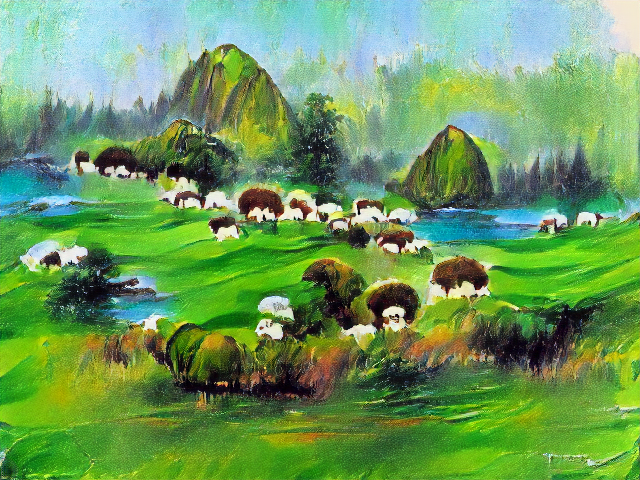

But this version of VQGAN+CLIP allows me to upload an image as a starting point. So I decided to start with an image that a different neural net had captioned as "a herd of sheep grazing on a lush green hillside". In fact, Azure image description had still called it "a herd of sheep grazing on a lush green hillside" even after I had removed all the sheep. With the image on the right as a starting point, would VQGAN simply add the sheep?

The answer is: sorta?

Can't really tell whether those are sheep or cauliflower. And did it add palm trees? The original image's composition is destroyed and what is left looks pretty flat.

Here is where it gets interesting. If I use the same prompt and add "Amazing awesome and epic", the picture gets noticeably better. "Oh," goes the neural net, "you wanted a GOOD picture".

And how good a picture you get depends on exactly how you ask for it. There are several phrases you can add that seem to make things better, like "trending on artstation" or "unreal engine" (a fancy new video game rendering engine).

Here's "a painting of a herd of sheep grazing on a lush green hillside in the style of disney trending on artstation | unreal engine" (prompt combo borrowed from here).

Granted the sheep are more like multicolored bundles of cloth, but the saturation and vignetting got much more dramatic. There's even a soft focus effect in the background. All the time it was giving me the flat, lackluster landscape of the first picture, the AI was perfectly capable of giving me this instead.

So what are some other ways of asking CLIP-VQGAN to try harder?

The results from "Fine art print" reminds me of unicorn calendars from the 80s or something. The sheep are sort of bubbling out from the hillside.

I tried to specify particular artists. Bob Ross was a hilarious mistake.

The Tim Burton version was very cool-looking, if completely unrecognizable as sheep.

"Award winning National Geographic Photography" gave me nice looking background cliffs and trees - and sheep that look disturbingly like people crawling around under green blankets.

But the most effective prompt? In terms of producing a realistic but dramatically lit landscape with recognizable mountains and hills and (okay not sheep)?

You're going to hate it. I hate it, and I'm the one who thought of it. But it's the natural extension of layering on descriptors to try to boost performance.

"dramatic atmospheric ultra high definition free desktop wallpaper"

With that cursed prompt as a base, I could layer more styles on top. I am ashamed to admit that "a herd of sheep grazing on a lush green hillside | dramatic atmospheric ultra high definition free desktop wallpaper | cubist cezanne" looks pretty cool.

The most haunted prompt turned out to be "a herd of sheep grazing on a lush green hillside | dramatic atmospheric ultra high definition free desktop wallpaper by Lisa Frank". I'm not sure what kind of rainbow apocalypse is happening here, but I wouldn't recommend poking at the violet shimmery patches that are oozing into the lake. Maybe those are the sheep.

For a more technical description of how CLIP steering works, and some gorgeous image examples, check out this blog post by Charlie Snell.

You can try CLIP+VQGAN for free by following the instructions in this tutorial (no coding or Spanish language skills necessary.) Or if you ask nicely, the @images_ai twitter account may try a prompt you request.

I generated way more images than will fit here, so I'll post a bunch more of them as bonus content. Become an AI Weirdness supporter to get bonus content! Or become a free subscriber to get new AI Weirdness posts in your inbox.