Fun with a neural net that transforms line drawings to cats

lewisandquark:

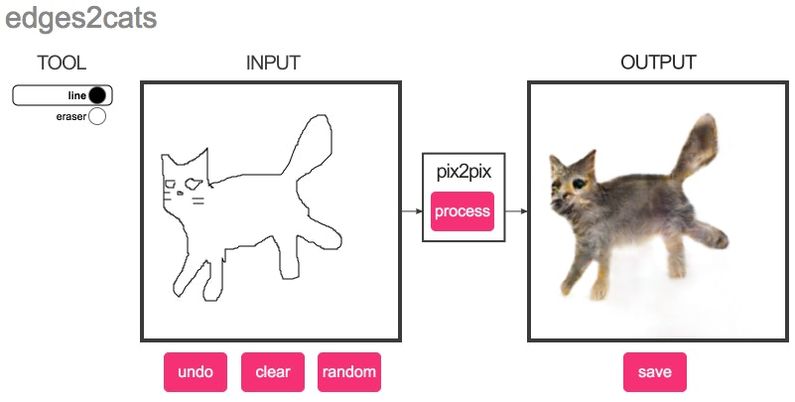

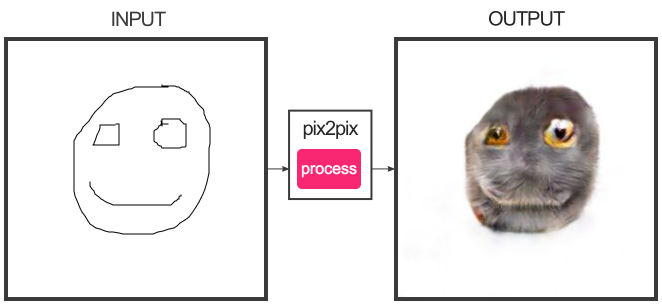

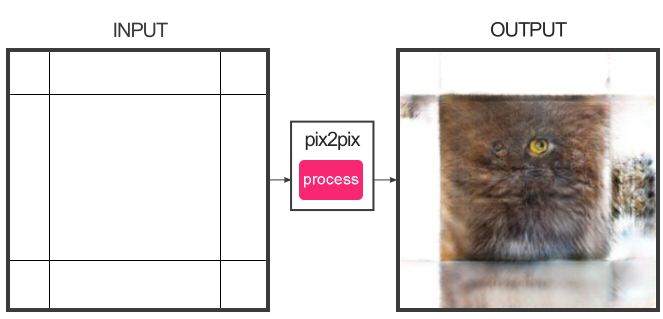

So there’s a neural network framework called pix2pix that can be trained to learn how to transform one type of image into another. It’s been used, for example, to convert satellite images into line drawings and vice versa (think Google Maps satellite view vs. the one that shows boxes where all the buildings are). There’s also a trained version of pix2pix that converts a block drawing to a building facade (although it tends to make the building facades into creepy post-apocalyptic burnt-out shells).

Less useful but arguably even more creepy is the version by Christopher Hesse that turns line drawings into cats.

He trained it on about 2000 stock photos of cats that he ran through an edge-detection filter. The edge-detected (line drawing) versions served as the starting images and the original photos as the ending images, so the neural network learned to convert line drawings back into the original cat photographs.
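For the curious, here is a rough sketch of how that kind of training pair could be put together. It is only an illustration under assumptions: the post doesn't say which edge-detection filter was used, so a plain Sobel filter stands in for it, and the names `sobel_edges`, `make_training_pair`, and `toy_photo` are made up for this example. A tiny grayscale grid plays the role of a cat photo.

```python
# Sketch only: Sobel is a stand-in, since the actual edge filter is not named.
# All function and variable names here are hypothetical.

def sobel_edges(image, threshold=128):
    """Return a binary edge map (1 = edge) for a 2D list of grayscale values."""
    height, width = len(image), len(image[0])
    edges = [[0] * width for _ in range(height)]
    # Border pixels are left at 0 because the 3x3 window does not fit there.
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Horizontal gradient: right column minus left column.
            gx = (image[y - 1][x + 1] + 2 * image[y][x + 1] + image[y + 1][x + 1]
                  - image[y - 1][x - 1] - 2 * image[y][x - 1] - image[y + 1][x - 1])
            # Vertical gradient: bottom row minus top row.
            gy = (image[y + 1][x - 1] + 2 * image[y + 1][x] + image[y + 1][x + 1]
                  - image[y - 1][x - 1] - 2 * image[y - 1][x] - image[y - 1][x + 1])
            if (gx * gx + gy * gy) ** 0.5 >= threshold:
                edges[y][x] = 1
    return edges


def make_training_pair(photo):
    """Pair the line-drawing version (network input) with the photo (target)."""
    return sobel_edges(photo), photo


# Toy "photo": dark left half, bright right half, so there is one vertical edge.
toy_photo = [[0, 0, 255, 255] for _ in range(4)]
line_drawing, target = make_training_pair(toy_photo)
```

The network only ever sees pairs like `(line_drawing, target)`, which is why it will later insist that any set of lines must be hiding a cat.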

The result? A neural network that tries to make ANY line drawing into a photo of a cat. And, gloriously, you can play with it in your browser here. Here are some results I’ve produced.

Here, it was able to turn my primitive trackpad-drawn scribble into a furry cat, complete with fluffy underbelly. Not bad, neural network.

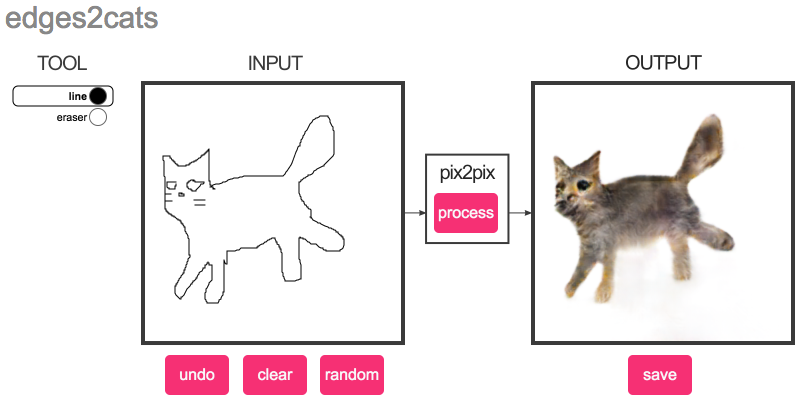

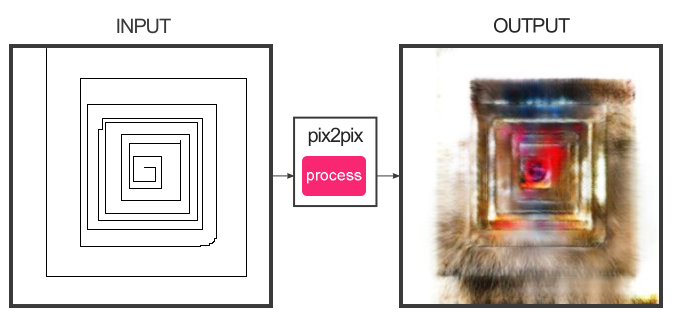

But, if required, the neural network can make do with less. The results get increasingly creepy, though.

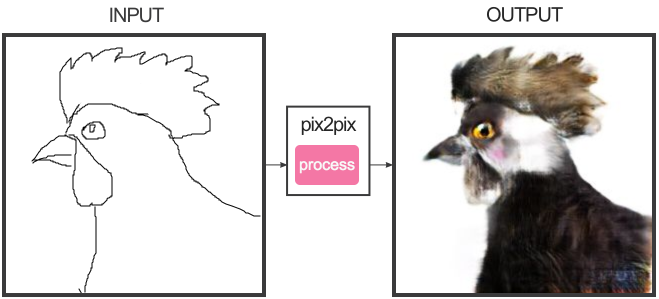

The neural network has not been trained on roosters. The network has only been trained on cats. 12/10 Excellent bright-eyed cat. Would stroke its strange furry head-crest.

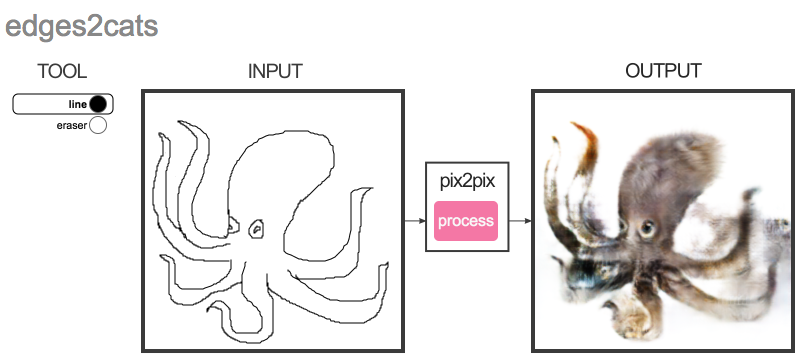

This is a strange grabby cat, but at least the neural network has identified the eyes.

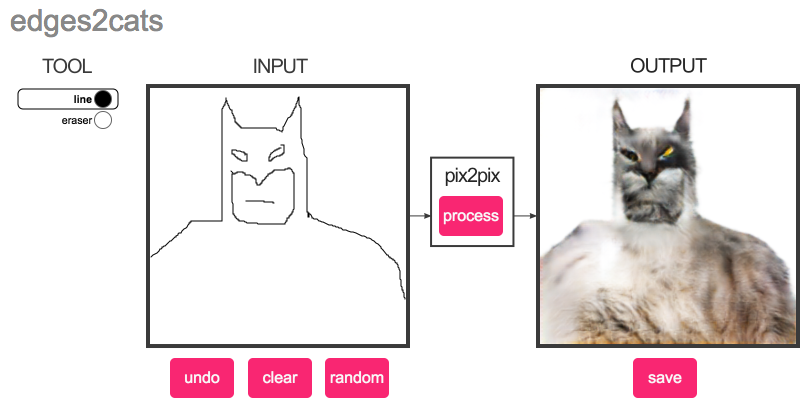

Ears? Check. Eyes? Check. Fluffy underbelly? Check. Next!

tried some of my own

uhhh…

WHAT HAVE YOU DONE
