AI + Vintage American cooking: a combination that cannot be unseen

A week ago, in a sudden fit of terrible judgement, I decided to find out what would happen if I:

- Asked people to help me collect examples of the worst, the weirdest, the most gelatinous recipes that vintage American cooking has to offer, then

- Trained a neural net to imitate them

People submitted over 800 recipes in all, including such recipes as:

- “Beef Fudge” (contains marshmallow, chocolate chips, and ground beef),

- “Circus Peanut Jello Salad” (also contains crushed pineapple and Cool Whip),

- “Tropical Fruit Soup” (contains banana, grapes, and a can of cream of chicken soup), and

- “Lemon Lime Salad” (also contains cottage cheese, mayonnaise, and horseradish)

As I watched this dataset coalesce, much as one might watch a speeding dumpster begin to spin out of control, I began to approach the state I dreaded: all the recipes began to seem normal.

Shrimp + grapefruit + lemon jello? Citrus seafood is a thing.

Chili sauce + lemon jello + cottage cheese + mayo? Well it’s not SWEETENED jello, so

I began to wonder if I would actually be able to tell the difference between the neural net recipes and the real thing. Jello was supposed to be easy to prepare, after all - maybe through repetition an advanced neural net like GPT-2 would learn how to make basic jello, and then anything it decided to chuck in there would be technically reasonable. Maybe it would even converge on an ideal form, one that distilled human invention down to its essentials.

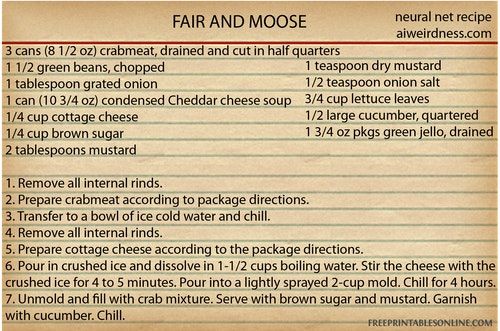

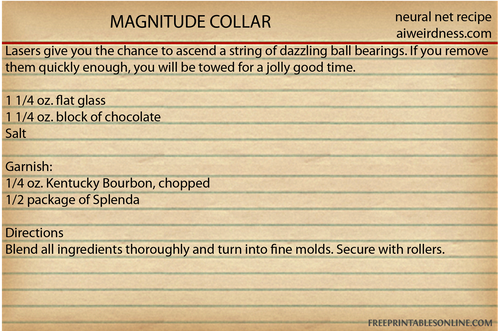

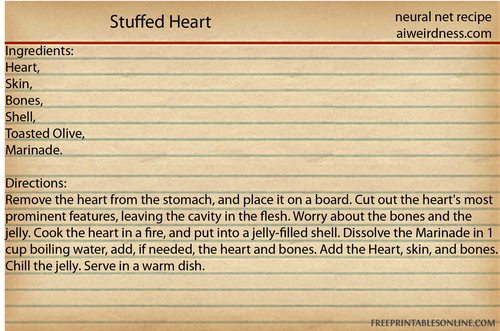

No, as it turns out. Here’s a neural net recipe.

It does cocktails, too.

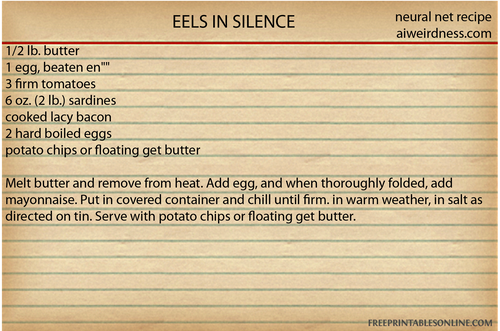

The training data contained a lot of things. It contained eel only once. For some reason the AI has decided to use eel a LOT.

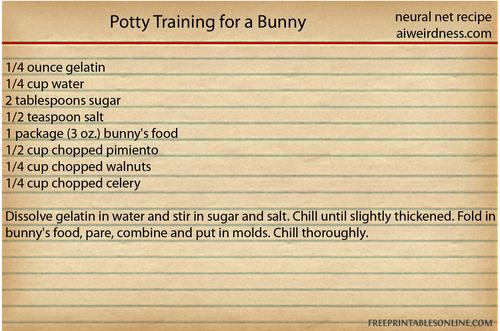

It also invents ingredients.

Some of the neural net recipes bear at least some resemblance to the human versions, but manage to mess them up profoundly. Without a sense that the recipe directions are describing ingredients and things that happen to them, the neural net never gets the hang of jello - that you need hot water to make it gel, that it doesn’t go in the oven. It also forgets to add all of its ingredients, or introduces some that were never mentioned before. This is partly because its memory is terrible, and partly because it doesn’t know what’s important.

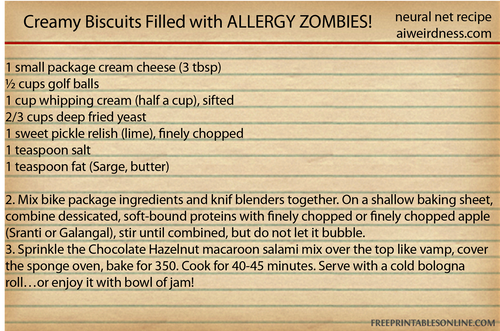

I couldn’t find a setting at which the neural net recipes could consistently pass for human. Set the chaos levels too low and the neural net would repeat the same few recipes, forgetting a different key step or ingredient each time. Set the chaos levels too high and the neural net would get ever more inventive, producing recipes that promised creamy lime and called for golf balls or elk hide, or directed the chef to remove the lamb’s giblets.
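For the curious, here's a minimal sketch of how a chaos setting like this typically works, assuming it corresponds to what's usually called sampling temperature. The vocabulary, the scores, and the function names are all made up for illustration; this is not the actual GPT-2 code, just the general idea of dividing the model's scores by a temperature before picking the next word.

```python
import math
import random


def temperature_probs(logits, temperature):
    """Turn raw scores into probabilities after dividing by the temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max so exp() stays numerically stable
    weights = [math.exp(s - peak) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]


def sample(logits, temperature, rng):
    """Pick one index at random according to the temperature-scaled probabilities."""
    probs = temperature_probs(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical scores for the word that follows "1 package lemon ..."
vocab = ["jello", "juice", "eel", "golf balls"]
logits = [4.0, 2.5, 0.5, -1.0]

rng = random.Random(0)
for temperature in (0.2, 1.0, 2.0):
    picks = [vocab[sample(logits, temperature, rng)] for _ in range(10)]
    print(temperature, picks)
```

At a low temperature nearly all the probability piles onto the top-scoring word, which is why the output gets repetitive. At a high temperature the unlikely words get a real chance, and that's when the golf balls show up.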

Some of its recipes were beyond bizarre.

Remember that today’s AI is much closer in brainpower to an earthworm than to a human. It can pattern-match but doesn’t understand what it’s doing. Commercial AI is not significantly smarter than this recipe AI. Hopefully, humans have just done a better job of preventing it from making oblivious mistakes.

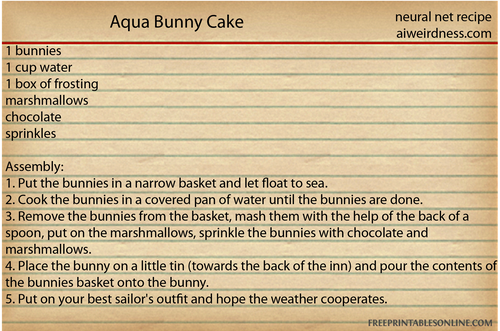

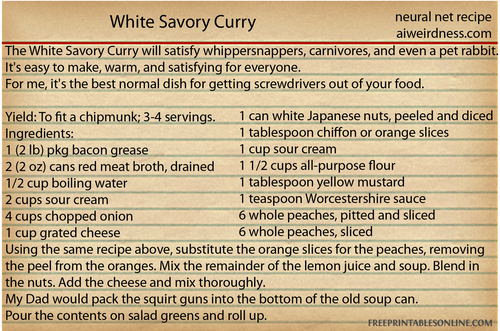

It got to the point where I would see a recipe like this and be excited and proud of the neural net. Then I would realize just how very low my standards had fallen.

One thing the neural net has learned from humans is that it’s good to include a story with your recipe.

It is bad at this.

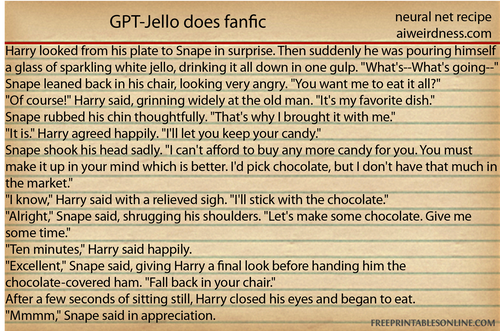

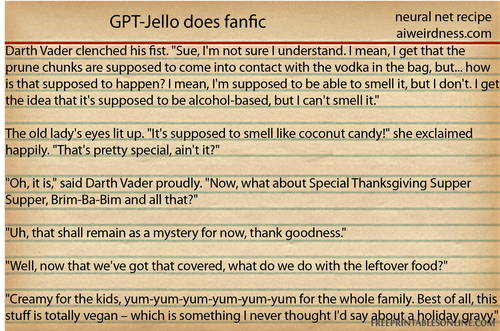

The neural net puts lots of words in its recipes that were never in the jello-centric training data. It’s drawing on its initial general training on internet text, during which it read a LOT of fanfic, and it still remembers that now. Except now all its stories center around food.

It’s trying. It’s startlingly bad. It wants us to remove the internal rinds twice. AI’s not ready to take over the world - it can’t even figure out the kitchen.

AI Weirdness supporters get bonus content: More jello-centric neural net recipes, including some that were too long to post here. Be especially afraid of the ones that aren’t exactly “recipes”. (Or become a free subscriber to get new AI Weirdness posts in your inbox!)

My book on AI is out, and you can now get it in any of these several ways: Amazon - Barnes & Noble - Indiebound - Tattered Cover - Powell’s